March 7, 2026

It's actually really interesting to hear about COBOL. It was well before my time, so it was interesting to hear about what it was originally meant to do.

I have also been extremely vocal about the first AI boom in the 60s, so it was really comforting to hear a senior person in the industry talk about it as well.

"By the mid-1970s, reality had intervened."

That line also made me giggle. I would say the same thing is in store for us soon...

"Software is not just code. It is a precise specification of behavior."

Another banger quote. I am really starting to love this guy. Insightful.

The part about AI trained on AI is really funny, because it has been well documented. About 2 months after release, models reach peak accuracy and usefulness, and then, as they continue to train via backpropagation on the internet (which now largely contains AI-generated content), they decline. That makes sense logically: if you feed an image to a noise generator in a loop, it just outputs nonsense noise. So if you feed a good guessing machine its own output, you're gonna get guesses built on guesses.
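To make that feedback loop concrete, here is a toy Python sketch. It is a big simplification and an assumption on my part: the "model" is just a bell curve (a Gaussian) fitted to data, and each new generation is fitted only to samples drawn from the previous generation's fit. `simulate_collapse` is a hypothetical helper, not from any library. With only a handful of samples per generation, the fitted spread tends to shrink toward zero, so the model gradually forgets the variety that was in the original data.

```python
import random
import statistics


def simulate_collapse(generations: int = 100, samples_per_gen: int = 10, seed: int = 0) -> list[float]:
    """Toy model-on-model loop: refit a Gaussian to samples from the previous fit.

    Hypothetical illustration only. Returns the fitted standard deviation
    after each generation (index 0 is the original "real" distribution).
    """
    rng = random.Random(seed)
    mu, sigma = 0.0, 1.0  # generation 0: the "real" data distribution
    history = [sigma]
    for _ in range(generations):
        # The next model only ever sees output produced by the current model.
        data = [rng.gauss(mu, sigma) for _ in range(samples_per_gen)]
        mu = statistics.fmean(data)
        sigma = statistics.pstdev(data)  # maximum-likelihood estimate of the spread
        history.append(sigma)
    return history


if __name__ == "__main__":
    history = simulate_collapse()
    for gen in (0, 10, 50, 100):
        print(f"generation {gen:3d}: sigma = {history[gen]:.6f}")
```

Printing a few generations should show `sigma` falling far below its starting value of 1.0; the exact numbers depend on the seed. A larger `samples_per_gen` slows the decay down but does not remove the downward drift.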

All of this maybe raises the question of why we keep the weights open for backpropagation when we release a model. Why not freeze the weights? It just seems like a security or quality issue waiting to happen.

Misconceptions:

1 - Anyone will be able to vibe code

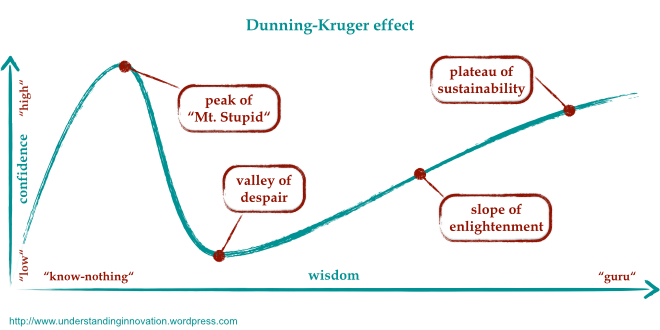

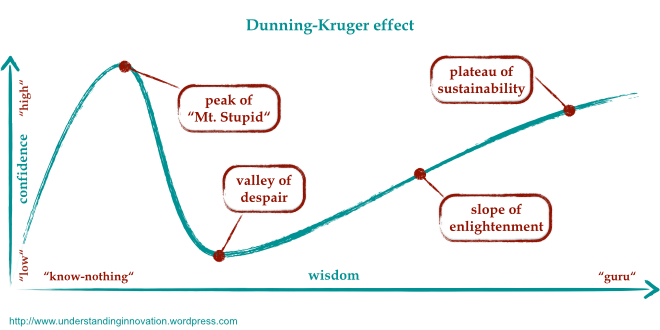

No. Just no. Please refer to the Dunning-Kruger effect.

The people who think or say this about vibe coding are either at the peak of Mt. Stupid themselves or think they can take advantage of you because you are at the peak of Mt. Stupid.

"Software is not just code. It is a precise specification of behavior."

I highlighted this above, but it is worth repeating. No one's job title is "coder". Coding is maybe 25-50% of any software job. The rest (design, architecture, logic, testing, debugging, etc.) is not worth the risk of AI failure. You cannot make an educated guess about these things and afford to be wrong 1 time in 15.
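That 1-in-15 figure matters more than it looks, because the errors compound. A minimal Python sketch, assuming each step of a task (design, logic, tests, and so on) is an independent guess with the same error rate: the chance that every step comes out right is (1 - error rate) raised to the number of steps. `chance_all_correct` is a hypothetical helper for illustration, and real errors are rarely this tidy or independent.

```python
def chance_all_correct(error_rate: float, steps: int) -> float:
    """Probability that `steps` independent guesses are all correct.

    Assumes (hypothetically) that every step fails independently
    with the same `error_rate`.
    """
    return (1.0 - error_rate) ** steps


if __name__ == "__main__":
    # Use the 1-in-15 error rate from the text.
    for steps in (1, 5, 10, 20):
        print(f"{steps:2d} steps: {chance_all_correct(1 / 15, steps):.3f}")
```

At 10 steps the chance of getting everything right is already about 0.50, a coin flip.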

2 - AI will take over the world

Again, harping on the fundamental misunderstanding of how AI technologies work: they do not think. They make guesses. They will never be sentient. The gap between pattern recognition and cognitive thinking is the size of the Grand Canyon. They are not even in the same ballpark. Short of a massive breakthrough in quantum computing or algorithmic design, there is no way to get from one to the other.

3 - Why do X/Y/Z if AI can just do it?

There are two main genres of this argument. The first is about art forms. That one is silly, so I won't respond with anything more than Oscar Wilde's famous line, "All art is quite useless," and, expanding on it, "A work of art is useless as a flower is useless. A flower blossoms for its own joy."

The second is more about the utility of an activity with less joy in the journey. Why learn to do math if a calculator exists? Well, the answer in this case is that 1 time in 20 the calculator is wrong, and you need to be able to recognize when that happens. When the calculator tells you 29 + 20 = 100, you need to be able to tell that it doesn't sound right, and then do the hand arithmetic of 20 + 29 yourself.
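The "does that sound right?" check can itself be written down. A minimal Python sketch, assuming the check is rounding each number to the nearest ten and comparing the claimed sum to that estimate; `looks_plausible` and its `tolerance` parameter are hypothetical names for illustration. Rounding moves each number by at most 5, so a correct sum is never more than 10 away from the estimate.

```python
def looks_plausible(a: int, b: int, claimed: int, tolerance: int = 10) -> bool:
    """Sanity-check a claimed sum a + b by estimating with rounded numbers."""
    # round(x, -1) rounds to the nearest ten, e.g. 29 -> 30, 20 -> 20.
    estimate = round(a, -1) + round(b, -1)
    # Each operand moves by at most 5 when rounded, so a correct sum
    # is always within 10 of the estimate.
    return abs(claimed - estimate) <= tolerance


if __name__ == "__main__":
    print(looks_plausible(29, 20, 100))  # False: 100 is nowhere near 30 + 20 = 50
    print(looks_plausible(29, 20, 49))   # True: close to the estimate of 50
    print(29 + 20)                       # the hand arithmetic: 49
```

The estimate only flags answers that are way off; confirming the exact value still takes the hand arithmetic.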